
JINKUSU CAM is a real‑time face‑and‑voice deepfake kit that can feed manipulated video and audio directly into on‑boarding checks, and if its low‑latency realism becomes routine, traditional liveness checks will no longer be a reliable gate for major exchanges. That condition turns the problem from a vendor misconfiguration into an operational imperative: exchanges, KYC vendors, and regulators must adopt multi‑modal detection, emulator and virtual‑camera hardening, and cross‑platform forensic signals to avoid systemic risk.

The tool chains GPU‑accelerated face‑swapping (notably InsightFace frameworks), real‑time voice modulation, and virtual camera outputs so altered streams are accepted as live input by desktop and mobile verification apps. Because it can run through Android emulators, attackers can automate on‑device flows that mimic genuine mobile onboarding sessions used by Binance, Coinbase, Kraken, and OKX.

That combination of high‑fidelity face swaps, camera injection, and synchronized voice matches the World Economic Forum’s Cybercrime Atlas warning that low‑latency, high‑fidelity swaps plus camera injection create the greatest KYC exposure. The margin for detection is shrinking: with more compute and better models, the temporal synchronization errors and lighting mismatches that detectors rely on become subtler.

Multi‑modal defenders analyze different signal classes: micro‑facial dynamics, voice spectral and prosodic features, metadata (device IDs, emulator fingerprints), and behavioral profiles over sessions. Vendors such as Authenta AI report sustained live detection accuracies above 94% by fusing those signals and using continuous learning to adapt to new generators.
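One way to picture the fusion step is a weighted combination of per‑modality spoof scores with separate review and reject cut‑offs. The sketch below is illustrative only: the `ModalityScores` fields, the weights, and the thresholds are assumptions made for this example, not a description of how Authenta AI or any other vendor fuses signals.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ModalityScores:
    """Per-modality spoof scores in [0, 1]; higher means more likely synthetic."""
    face: float      # micro-facial dynamics
    voice: float     # spectral and prosodic features
    metadata: float  # device IDs, emulator fingerprints
    behavior: float  # behavioral profile over sessions


# Hypothetical weights (they sum to 1); a real system would learn or tune these.
WEIGHTS = {"face": 0.35, "voice": 0.25, "metadata": 0.25, "behavior": 0.15}


def fused_score(scores: ModalityScores) -> float:
    """Return the weighted average of the four modality scores."""
    values = asdict(scores)
    for name, value in values.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} score must be in [0, 1], got {value}")
    return sum(WEIGHTS[name] * value for name, value in values.items())


def decide(scores: ModalityScores, review_at: float = 0.4, reject_at: float = 0.7) -> str:
    """Map the fused score to 'accept', 'review', or 'reject' (assumed cut-offs)."""
    score = fused_score(scores)
    if score >= reject_at:
        return "reject"
    if score >= review_at:
        return "review"
    return "accept"
```

A middle "review" band is one simple way to route borderline sessions to a human instead of forcing a binary decision.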

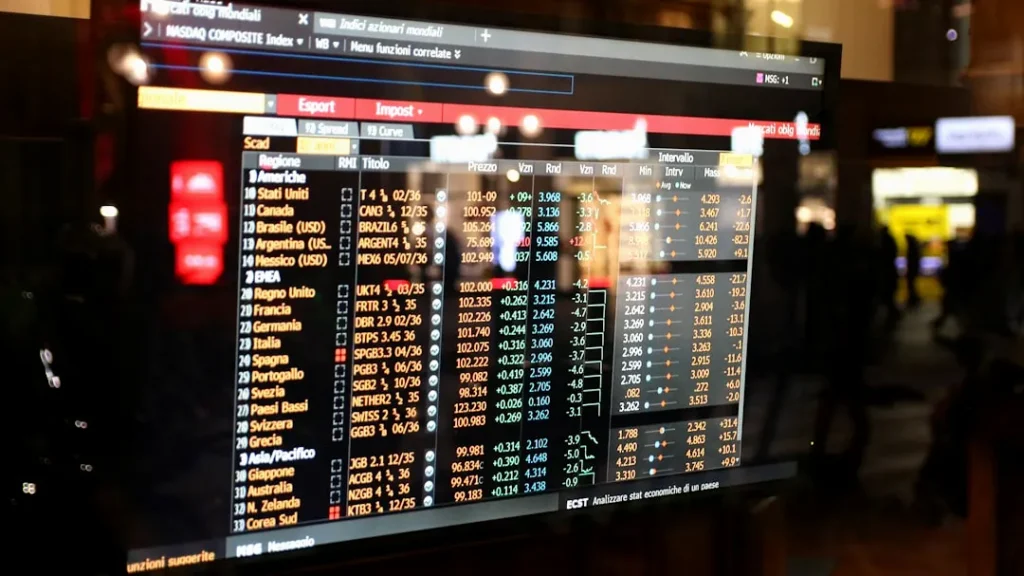

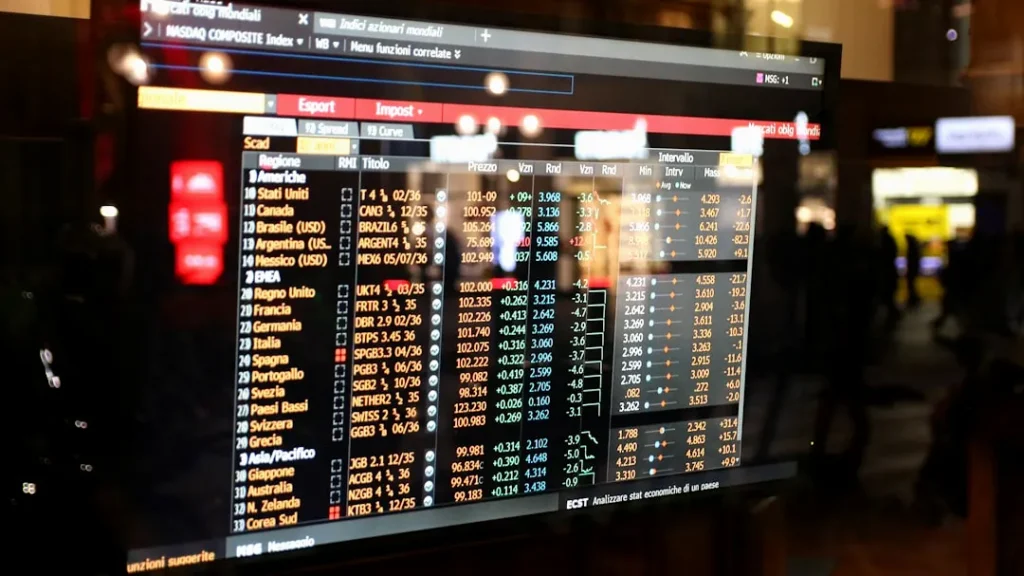

| Attacker Technique | Effective Defender Signal | Practical Weakness / Threshold |
|---|---|---|
| GPU face swap with InsightFace + virtual camera | Micro‑expression analysis & frame‑level consistency checks | Breaks when latency >20–50 ms or lighting is inconsistent across frames |
| Real‑time voice changers, pitch modulation | Spectral voice analysis + prosody patterns | Degrades if short audio segments or heavy compression mask artifacts |
| Android emulator automation | Device attestation + emulator fingerprinting | Fails if onboarding apps accept virtual camera APIs without attestation |

JINKUSU CAM isn’t an isolated novelty. It is distributed as a fraud kit, and that distribution links back to Starkiller phishing tools that used real‑time reverse proxies to harvest credentials. This lineage, from phishing to synthetic identity kits, means fraudsters can combine credential reuse, stolen images, and deepfakes to scale money‑laundering workflows.

The same techniques affect banking call centers, insurance claims, HR screening, and media verification. Incidents like the 2023 Retool compromise (in which a caller used a cloned voice to impersonate IT staff and obtain a multi‑factor authentication code) and the $5.5 billion in “pig butchering” scams reported for 2024 show how deepfake‑adjacent fraud translates into large, cross‑sector financial losses and reputational damage.

Treat the critical threshold as the point where adversarial deepfakes routinely achieve sub‑50ms latency with synchronized audio and video; once that’s common, single‑factor liveness checks should be retired. In practice, platforms should require: (1) multi‑modal detection (face, voice, metadata, behavior), (2) device attestation that blocks virtual camera feeds, (3) cross‑session behavioral baselines, and (4) forensic document verification tied to independent registries.
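The four requirements can be read as a gate in which every control must pass before an account is opened. The following is a minimal sketch under that reading; the `OnboardingEvidence` fields and control names are hypothetical, and each boolean stands in for what would be a full subsystem in practice.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class OnboardingEvidence:
    """Hypothetical summary of one onboarding session's check results."""
    multimodal_passed: bool        # (1) face, voice, metadata, behavior detectors
    device_attested: bool          # (2) device attestation succeeded
    virtual_camera_detected: bool  # (2) a virtual camera feed was observed
    behavior_baseline_ok: bool     # (3) consistent with cross-session baseline
    document_registry_match: bool  # (4) document verified against a registry


def failed_controls(evidence: OnboardingEvidence) -> List[str]:
    """Return the names of the required controls that did not pass."""
    failures = []
    if not evidence.multimodal_passed:
        failures.append("multi_modal_detection")
    # Attestation only counts if no virtual camera feed was seen.
    if not evidence.device_attested or evidence.virtual_camera_detected:
        failures.append("device_attestation")
    if not evidence.behavior_baseline_ok:
        failures.append("behavioral_baseline")
    if not evidence.document_registry_match:
        failures.append("document_verification")
    return failures


def may_onboard(evidence: OnboardingEvidence) -> bool:
    """Allow onboarding only when all four controls pass."""
    return not failed_controls(evidence)
```

Returning the list of failed controls, not just a boolean, keeps the reason for a refusal available for logging and human review.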

Regulators and industry bodies, echoing WEF recommendations, need to define minimum testbeds and certification criteria for KYC vendors, including red‑teaming with InsightFace‑class generators. Two checkpoints are worth monitoring closely: broader deployment of certified multi‑modal detectors (track dates and vendor certifications), and any regulatory mandates that require attestation or evidence‑level logging for onboarding flows.

When should an exchange escalate inspections? If vendor testing shows detection drops below the vendor’s live benchmark (e.g., Authenta AI’s >94%) or if penetration testing reproduces camera‑injection bypasses, escalate immediately.
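That escalation rule is simple enough to write down directly. The sketch below assumes a red‑team run that reports how many synthetic sessions were detected out of the total attempted; the function names and the 0.94 default (taken from the benchmark quoted above) are illustrative choices, not a vendor interface.

```python
def detection_rate(detected: int, total: int) -> float:
    """Share of synthetic test sessions that the detector caught."""
    if total <= 0 or not 0 <= detected <= total:
        raise ValueError("need total > 0 and 0 <= detected <= total")
    return detected / total


def should_escalate(
    rate: float,
    benchmark: float = 0.94,
    injection_bypass_reproduced: bool = False,
) -> bool:
    """Escalate if detection falls below the benchmark or a bypass was reproduced."""
    return injection_bypass_reproduced or rate < benchmark
```

For example, `should_escalate(detection_rate(90, 100))` is true because 0.90 is below the 0.94 benchmark, while a run at 97 out of 100 escalates only if a camera‑injection bypass was also reproduced.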

Can users protect themselves? Individual protection is limited; users should prefer platforms enforcing hardware attestation and avoid reusing breached imagery across profiles.

Are false positives a concern? Yes. Behavioral and multi‑modal systems can flag legitimate users, so exchanges must tune thresholds, maintain human review capacity, and track false‑positive rates over time.
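Tracking that rate over time could look like the rolling‑window sketch below. It assumes each session eventually receives a ground‑truth label (for instance from human review), which is itself a strong assumption; the `FalsePositiveTracker` class and its window size are hypothetical.

```python
from collections import deque
from typing import Deque, Optional, Tuple


class FalsePositiveTracker:
    """Rolling false-positive rate over the most recent labeled sessions."""

    def __init__(self, window: int = 1000) -> None:
        if window <= 0:
            raise ValueError("window must be positive")
        # Each entry is (flagged_by_detector, confirmed_legitimate).
        self._sessions: Deque[Tuple[bool, bool]] = deque(maxlen=window)

    def record(self, flagged: bool, legitimate: bool) -> None:
        """Store one session outcome; the oldest entry drops out when full."""
        self._sessions.append((flagged, legitimate))

    def rate(self) -> Optional[float]:
        """Flagged share of legitimate sessions, or None if none are recorded."""
        flags = [flagged for flagged, legitimate in self._sessions if legitimate]
        if not flags:
            return None
        return sum(flags) / len(flags)

    def exceeds(self, limit: float) -> bool:
        """True when the current rate is known and above the given limit."""
        current = self.rate()
        return current is not None and current > limit
```

A bounded window keeps the metric responsive to recent threshold changes instead of averaging over the detector's whole history.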
