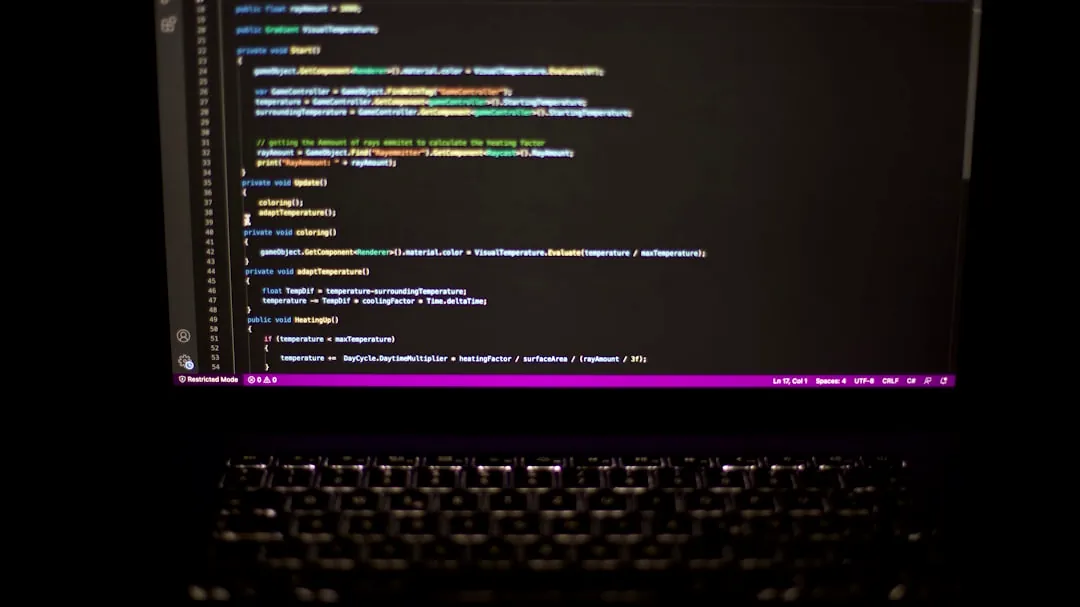

Tether’s new QVAC Fabric lets developers fine-tune and run billion-parameter language models on everyday devices, from a Samsung S25 to Apple Silicon and consumer AMD/Intel GPUs, by combining Microsoft’s BitNet 1-bit architecture with LoRA (low-rank adaptation) fine-tuning. That combination cuts VRAM needs dramatically (BitNet reports up to a 77.8% reduction versus 16-bit models) and yields mobile-GPU inference that is 2–11× faster than on CPUs. As a concrete benchmark, fine-tuning a 1-billion-parameter model on a Samsung S25 takes about 1 hour 18 minutes, while a 125M-parameter model can finish in roughly 10 minutes.

Beyond faster mobile inference, QVAC Fabric lowers the hardware barrier so individual developers and small teams can iterate on large models without renting cloud instances or buying datacenter-grade GPUs. The framework’s practical outcomes are immediate: shorter turnaround for local prototypes, the ability to embed advanced features directly into apps and wallets, and on-device model updates that keep sensitive data off centralized servers—an obvious fit for privacy-sensitive use cases like on-device wallet heuristics or federated analytics pilots.

The move also broadens which silicon is viable: QVAC supports Qualcomm Adreno, Apple Silicon, AMD and Intel GPUs as well as many mobile accelerators, not just NVIDIA. That hardware-agnostic stance makes this more than a performance tweak for phones: it is an attempt to decentralize where AI compute happens and to erode the assumption that only specialized cloud instances, a market dominated today by NVIDIA’s discrete GPUs, can handle advanced models.

BitNet’s 1-bit architecture reduces memory footprint by storing each weight in about one bit rather than 16. Tether pairs that with LoRA-style low-rank adaptation, so fine-tuning trains a small set of added low-rank adapter weights while the base model stays frozen, instead of re-training a full 16-bit model. Together, these account for the reported VRAM savings of up to 77.8% and make billion-parameter models feasible on devices with limited VRAM and thermal budgets.
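To make the memory argument concrete, here is a rough back-of-envelope sketch in Python. It counts weight storage only, so it does not attempt to reproduce the reported 77.8% figure, and the layer size (2048) and LoRA rank (8) are illustrative assumptions rather than values taken from QVAC Fabric or BitNet.

```python
def weight_bytes(num_params: int, bits_per_weight: float) -> float:
    """Bytes needed to store the weights alone (no activations, no optimizer state)."""
    return num_params * bits_per_weight / 8


def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters LoRA adds for one d_out x d_in weight matrix.

    LoRA freezes the original matrix and trains two small factors,
    B (d_out x rank) and A (rank x d_in).
    """
    return rank * (d_in + d_out)


# A 1-billion-parameter model, as in the Samsung S25 benchmark.
params = 1_000_000_000
fp16_bytes = weight_bytes(params, 16)    # 2.0 GB of weights at 16 bits
one_bit_bytes = weight_bytes(params, 1)  # 0.125 GB of weights at 1 bit
reduction = 1 - one_bit_bytes / fp16_bytes

print(f"16-bit weights: {fp16_bytes / 1e9:.3f} GB")
print(f"1-bit weights:  {one_bit_bytes / 1e9:.3f} GB")
print(f"weight-only reduction: {reduction:.2%}")

# Hypothetical single layer: 2048 x 2048 matrix, LoRA rank 8 (assumed values).
full = 2048 * 2048
lora = lora_trainable_params(2048, 2048, 8)
print(f"full layer: {full} params, LoRA adapter: {lora} params "
      f"({lora / full:.2%} of the layer)")
```

The weight-only saving comes out larger than the reported total because real VRAM use also includes activations, adapter weights, and runtime buffers, none of which this sketch models.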

Practically, this means training and inference move from a single-architecture workflow (NVIDIA GPUs and CUDA) to a cross-platform toolchain. Tether’s implementation shows inference on mobile GPUs running 2–11× faster than CPU baselines and provides concrete timing data (about 1 hour 18 minutes to fine-tune a 1B-parameter model on a Samsung S25). Still, those timings come from Tether’s tests; independent benchmarks and wider hardware coverage will be needed to confirm consistent performance across different devices and workloads.

Lower hardware cost and improved privacy come with trade-offs: centralized cloud APIs still win on standardized authentication, billing, and scale. Enterprises accustomed to API keys, quotas, and consolidated logging will find a decentralized, device-first deployment model harder to fit into existing DevOps and compliance pipelines. Tether must bridge that gap or risk slow uptake among larger customers.

Market dynamics also matter. NVIDIA’s grip on discrete datacenter GPUs—still north of 80% of sales—gives cloud providers entrenched incentives to optimize around CUDA and established data-center tooling. For QVAC Fabric to materially shift infrastructure demand, the framework needs broad hardware support, independent performance audits, and demonstrable cost comparisons in production settings rather than lab tests.

Use QVAC Fabric when privacy, low-latency on-device features, or development cost constraints matter more than horizontal scalability and standardized enterprise integrations. For example, a crypto wallet team that needs real-time on-device inference and wants to avoid sending transaction data to a cloud model is a strong fit. Stick with centralized cloud GPUs if you need guaranteed multi-region availability, standardized billing, or the ability to train models substantially larger than the device-memory-constrained sweet spot.

| Decision criterion | QVAC / Consumer-device route | Cloud / NVIDIA route |
|---|---|---|
| Typical latency | Low for local inference; ideal for real-time on-device features | Higher network latency; better for centralized pipelines |
| Cost for early prototypes | Lower: uses existing consumer hardware | Higher: instance hours and GPU rental fees |
| Model size practicality | Up to billion-parameter range with 1-bit + LoRA; e.g. a 1B model fine-tuned in about 1 h 18 min on a Samsung S25 | Easier for very large models and multi-GPU training |
| Enterprise integration | Requires new workflows for auth, billing, and logging | Mature APIs, authentication, and compliance tooling |
| Privacy and data controls | Strong: on-device and federated learning options | Requires careful architecture to avoid sending raw data to cloud |

Q: What benchmarks should I request? Ask for device-level end-to-end timings (fine-tune and inference) on the exact hardware you plan to use, plus memory and battery profiles. Independent third-party runs are the most credible.

Q: How quickly should I expect broader hardware support? Watch for updates over the next 3–12 months: Tether’s next checkpoints are expanded GPU compatibility and on-device federated-learning pilots that would signal wider readiness.

Q: When should I pause adoption? If you require multi-region scaling, formal enterprise billing, or must run models far larger than the reported device-capable range, prefer cloud GPUs until independent benchmarks and integration tooling mature.
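The benchmark request in the first question can be made concrete with a small timing harness. The following is a minimal sketch in plain Python, not part of QVAC Fabric: `benchmark`, `speedup`, and the placeholder workload are hypothetical names, and a real run would swap in the actual fine-tune or inference call on the target device. It measures wall-clock time only; memory and battery profiling would need platform-specific tooling.

```python
import statistics
import time
from typing import Callable, Dict


def benchmark(workload: Callable[[], object], warmup: int = 2, runs: int = 5) -> Dict[str, float]:
    """Time a callable end to end and report median, min, and max seconds."""
    # Warm-up runs let caches and any lazy initialisation settle first.
    for _ in range(warmup):
        workload()

    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        workload()
        samples.append(time.perf_counter() - start)

    return {
        "median_s": statistics.median(samples),
        "min_s": min(samples),
        "max_s": max(samples),
    }


def speedup(baseline_s: float, candidate_s: float) -> float:
    """How many times faster the candidate is than the baseline."""
    if candidate_s <= 0:
        raise ValueError("candidate_s must be positive")
    return baseline_s / candidate_s


# Placeholder workload standing in for a real inference call (assumption).
def placeholder_workload() -> int:
    return sum(i * i for i in range(100_000))


result = benchmark(placeholder_workload, warmup=1, runs=3)
print(f"median: {result['median_s']:.4f} s "
      f"(min {result['min_s']:.4f}, max {result['max_s']:.4f})")
```

Running the same harness once against a CPU path and once against a GPU path, then passing the two medians to `speedup`, gives a figure that can be compared directly with the 2–11× range Tether reports.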
